Code-Free Prototypes

Earlier, we saw how observing human behavior can help you understand your problem domain and define a better solution, all without writing a single line of code. Eventually, however, you will need to try out candidate solutions with users, and you do not need to write code for that either. User testing should not wait on engineering; it should run in parallel with it. There are two classes of user testing related to AI technology, each with its own methodologies and considerations.

Wizard of Oz

The Wizard of Oz (WoZ) methodology allows you to test the dynamics of your AI system cheaply and quickly, without the long development cycles of engineering. A WoZ study involves a person or group of people (the ‘Wizard’) imitating an AI’s decision-making capabilities in order to evaluate that AI in situ. Subjects may be kept unaware of this fact, but do not have to be. The goal of a WoZ study is not to test whether the subject identifies the ‘AI’ as a person, but to determine whether a reasonably intelligent system could interpret data and interface with the subject successfully. To this end, the people imitating the AI must be strictly limited in the data available to them: give them only what your system will have access to (e.g. no browser windows or search queries). Often, a WoZ study needs nothing more than a person sitting in another room with access to a spreadsheet. Be equally careful to constrain the wizard’s use of open-ended responses in cases such as natural language. One method is to allow the wizard to choose only from lines in a pre-written script.
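The script constraint described above can be enforced with a very small tool, even if the rest of the study stays code-free. The following is a minimal sketch, not a prescribed implementation: the class name `ScriptedWizard`, the script keys, and the example lines are all hypothetical. The wizard picks a key for each user input, and anything off-script is replaced by a fixed fallback line and logged.

```python
from dataclasses import dataclass, field


@dataclass
class ScriptedWizard:
    """Restricts a human 'wizard' to a fixed set of pre-written lines.

    All names and script contents here are illustrative assumptions.
    """
    script: dict[str, str]                      # key -> approved response line
    fallback_key: str = "fallback"              # line used for off-script choices
    log: list[tuple[str, str]] = field(default_factory=list)

    def __post_init__(self) -> None:
        if self.fallback_key not in self.script:
            raise ValueError("script must contain the fallback line")

    def respond(self, user_input: str, chosen_key: str) -> str:
        """Return the scripted line the wizard chose.

        If the wizard picks a key that is not in the script, the fallback
        line is returned instead, so no free-form text reaches the subject.
        """
        key = chosen_key if chosen_key in self.script else self.fallback_key
        self.log.append((user_input, key))
        return self.script[key]

    def fallback_rate(self) -> float:
        """Fraction of turns in which the wizard had no suitable scripted line."""
        if not self.log:
            return 0.0
        misses = sum(1 for _, key in self.log if key == self.fallback_key)
        return misses / len(self.log)
```

A high `fallback_rate()` after a session is one possible signal that the script, or the data the wizard was given, does not cover what users actually ask for.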

Even Lower Fidelity Prototyping

When testing your product with a WoZ study, you will tend to recognize the shortcomings of your system quickly, if any arise. For example, if the user requires additional context, they may ask for more information or get confused (see Transparency). It may turn out that your user needs guidance rather than an automated decision (see Augmentation). If the WoZ study reveals that your ‘wizard’ is incapable of assisting the user (e.g. that the Excel spreadsheet does not provide enough information), your team may want to engineer an altered product for further WoZ testing. This small effort may eventually contribute to the full product, and may de-risk your project while providing your team with valuable insights.

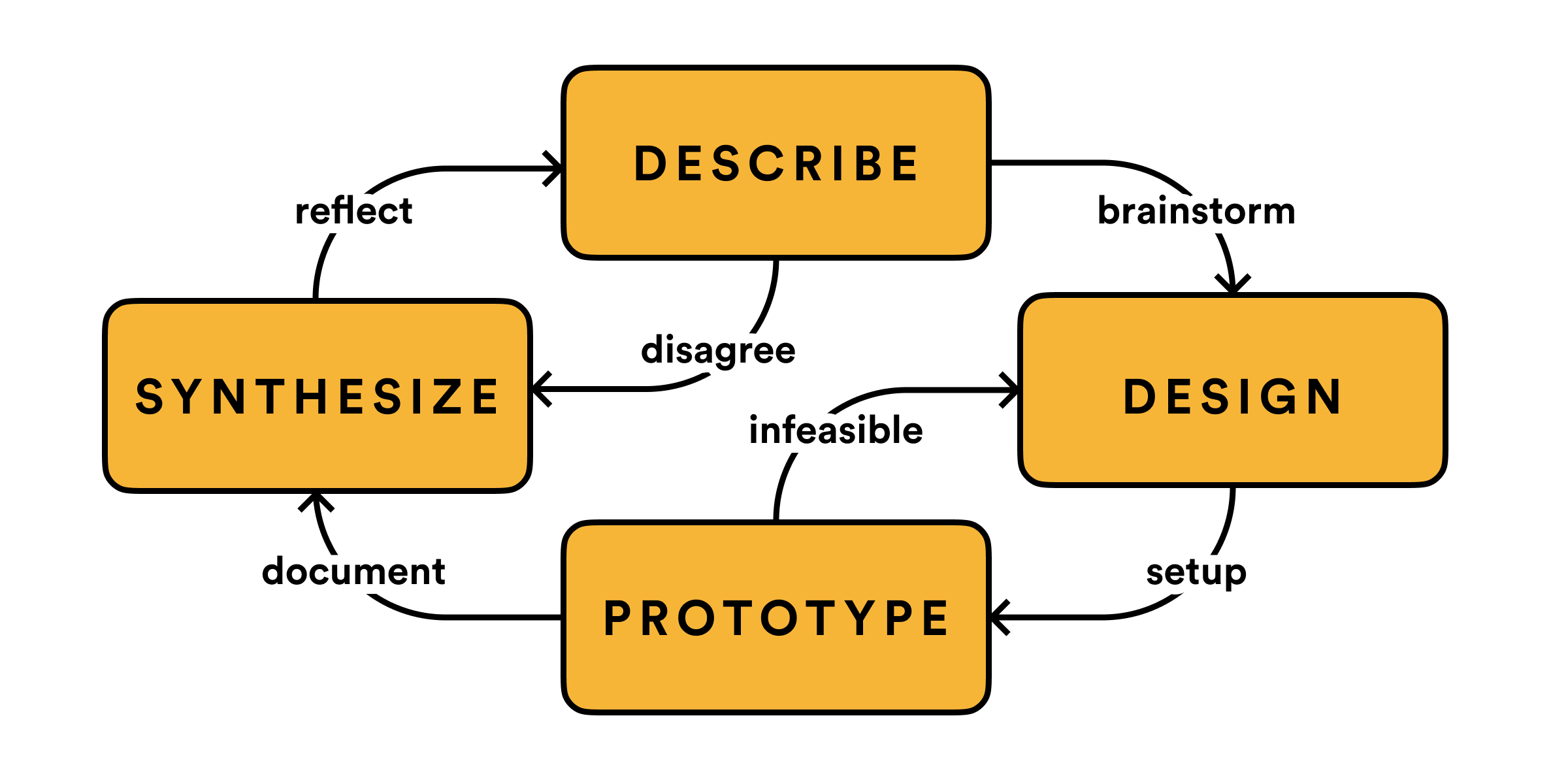

Iterative Design

It is similarly important to test early versions of your AI system, despite the potential inaccuracies of an imperfect model. In certain high-risk domains such as medicine this may not be possible. In most situations, however, reduced accuracy will actually improve the quality of insights from user studies.

All AI systems make mistakes (see Errata). Rather than attempting to eliminate error cases, use your iterative design process to understand failure modes and design for them. You should seek to understand failure cases in two ways. First, identify whether an ‘escalation’ or executive judgment is required. In certain cases such as fraud detection, a user who attempts to correct an erroneous fraud label needs a streamlined way to do so, as these exceptional cases can be highly stressful! Second, use iterative design to better understand your own criterion of success. Many problems don’t have a binary true-false answer. In cases such as recommendation, quality often follows a gradient from terrible to excellent. Try to find whether there’s a ‘step function’: a point at which results attain sufficient quality to become useful. This is also an excellent time to study how well users can interpret your system (see Intuition). Are users able to make helpful judgments about it? Will they give the right kind of feedback, if necessary?
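One rough way to look for the ‘step function’ mentioned above is to pair each result’s quality score with whether the study participant found it useful, then search for the single cut-off that best separates the two groups. The sketch below assumes you have collected such pairs yourself; the function name `best_threshold` and the idea of a numeric quality score are illustrative assumptions, not part of any particular method from this guide.

```python
def best_threshold(scores, useful):
    """Find the cut-off t for which the rule 'score >= t means useful'
    agrees most often with what participants reported.

    scores: numeric quality scores, one per result shown to a user
    useful: booleans, True if the participant judged that result useful
    Returns (threshold, agreement), where agreement is in [0, 1].
    """
    if not scores or len(scores) != len(useful):
        raise ValueError("scores and useful must be non-empty and equal length")

    best_t, best_agreement = None, -1.0
    # Only observed scores need checking: agreement changes nowhere else.
    for t in sorted(set(scores)):
        agree = sum((s >= t) == u for s, u in zip(scores, useful))
        agreement = agree / len(scores)
        if agreement > best_agreement:
            best_t, best_agreement = t, agreement
    return best_t, best_agreement
```

If the best agreement is close to 1.0, usefulness probably does jump at a fairly sharp quality level. If it stays low, usefulness may rise gradually with quality, or the score may not capture what users care about. With a handful of study participants this is only a prompt for discussion, not a statistical result.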